Mastering Chain-of-Thought Prompting is one of the most effective ways to handle complex business logic with LLMs in 2026. In the early days of LLMs, users were satisfied with quick answers. But as we move toward the 100-billion-won digital economy, “quick” is no longer enough. We need “correct” and “logical.”

1. The Intelligence Gap: Response vs. Reasoning

Many AI models fail at complex tasks because they commit to an answer immediately, without an explicit “thinking” process. This is where Chain-of-Thought Prompting comes in. By prompting the AI to decompose a complex problem into intermediate logical steps, we can substantially improve its accuracy on multi-step problems such as mathematical and strategic tasks. The size of the gain depends on the model and the task, so measure it on your own workload instead of trusting a headline percentage.

Why Reasoning Matters in Business

As AI models evolve, the difference between a simple response and a logical reasoning process becomes a key competitive advantage. Using this technique lets an LLM lay out its work the way a human analyst would, so each step of a calculation or strategic decision is visible and can be checked for accuracy.

2. How CoT Works: The “Think Aloud” Protocol

The core principle of Chain-of-Thought Prompting is simple: Do not ask for the answer; ask for the process.

Step-by-Step Logic Construction

Instead of a simple prompt, a CoT prompt guides the AI:

- Step 1: Analyze the current target audience and constraints.

- Step 2: Identify the top three pain points based on the data.

- Step 3: Propose a logical solution for each identified issue.

- Step 4: Synthesize these into a final, comprehensive strategy.

This “Thinking Aloud” protocol works because every step the AI writes becomes part of the context for the next one, so the final output is grounded in the logic of the previous steps. In 2026, some practitioners call this “Reasoning-as-a-Service.”
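To make the four steps above concrete, here is a minimal sketch of a prompt builder in Python. The function name `build_cot_prompt`, the sample task, and the “Final answer:” convention are illustrative assumptions, not part of any particular library; the resulting string can be sent to whichever model you use.

```python
from typing import Sequence


def build_cot_prompt(task: str, steps: Sequence[str]) -> str:
    """Return a prompt that asks for numbered reasoning steps, then a final answer."""
    lines = [
        f"Task: {task}",
        "",
        "Work through the following in order, showing your reasoning for each:",
    ]
    for number, step in enumerate(steps, start=1):
        lines.append(f"Step {number}: {step}")
    lines.append("")
    # Hypothetical convention: a fixed marker makes the answer easy to locate later.
    lines.append("After the last step, write one line starting with 'Final answer:'.")
    return "\n".join(lines)


# The four steps from the list above.
STEPS = [
    "Analyze the current target audience and constraints.",
    "Identify the top three pain points based on the data.",
    "Propose a logical solution for each identified issue.",
    "Synthesize these into a final, comprehensive strategy.",
]

# The task text is a made-up example.
prompt = build_cot_prompt("Draft a retention strategy for a subscription product.", STEPS)
print(prompt)
```

Keeping the steps in a list means you can reorder or swap them per use case without rewriting the whole prompt.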

3. Applying CoT to Business Automation

For a business architect, Chain-of-Thought Prompting is the secret to reliable automation. If you are using AI to make financial decisions, a single error can be catastrophic.

Practical Use Cases

- Financial Modeling: Ask the AI to list all assumptions before calculating ROI. CoT allows you to spot errors in the logic before they reach the result.

- Conflict Resolution: Use CoT to let the AI analyze the customer’s emotional state first, then the factual issue, and finally the empathetic response.

- Strategic Planning: Use “Zero-Shot CoT” by simply adding the phrase “Let’s think step by step” to your prompts.
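As a rough sketch of the Zero-Shot CoT idea from the last bullet, the helper below appends the trigger phrase to any question. The function name `zero_shot_cot` and the sample question are assumptions for illustration; the “Q: / A:” layout is one common way to frame the prompt, not a requirement.

```python
ZERO_SHOT_TRIGGER = "Let's think step by step."


def zero_shot_cot(question: str) -> str:
    """Frame a question so the model's answer begins with the reasoning trigger."""
    return f"Q: {question.strip()}\nA: {ZERO_SHOT_TRIGGER}"


# Made-up planning question, for illustration only.
planning_prompt = zero_shot_cot(
    "Should we enter the Japanese market next quarter given a fixed marketing budget?"
)
print(planning_prompt)
```

Because no worked examples are needed, this is usually the cheapest form of CoT to try first.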

Pro Tip: For those interested in the academic origins, you can explore the official Google Research blog on Chain-of-Thought Prompting to see how reasoning is measured.

4. The Evolution: Self-Consistency and Iterative CoT

Advanced prompt engineers in 2026 take CoT even further with Self-Consistency. This involves sampling several independent “Chains of Thought” for the same problem (five is a reasonable starting point) and then keeping the final answer that appears most often.
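The voting step can be sketched in a few lines of Python. This is a simplified illustration, not a reference implementation: `sample_chain` stands in for whatever call returns one sampled chain from your model, and the “Final answer:” marker is an assumed output convention. The canned chains below are invented so the example runs without a model.

```python
import re
from collections import Counter
from typing import Callable, Optional


def extract_answer(chain: str) -> Optional[str]:
    """Return the text after the last 'Final answer:' marker, or None if absent."""
    matches = re.findall(r"Final answer:\s*(.+)", chain)
    return matches[-1].strip() if matches else None


def self_consistent_answer(
    sample_chain: Callable[[], str], n_samples: int = 5
) -> Optional[str]:
    """Sample several chains and return the most frequent final answer."""
    answers = []
    for _ in range(n_samples):
        answer = extract_answer(sample_chain())
        if answer is not None:  # skip chains that never reached an answer
            answers.append(answer)
    if not answers:
        return None
    # On a tie, Counter keeps the answer that was seen first.
    return Counter(answers).most_common(1)[0][0]


# Stand-in for five sampled model outputs to "What is 25% of 120?"
canned = iter([
    "120 * 0.25 = 30. Final answer: 30",
    "25% of 120 is 30. Final answer: 30",
    "120 / 4 = 40. Final answer: 40",
    "A quarter of 120 is 30. Final answer: 30",
    "No clear result.",
])
result = self_consistent_answer(lambda: next(canned), n_samples=5)
print(result)
```

In this toy run one chain makes an arithmetic slip and one never finishes, yet the vote still lands on the answer the other three agree on.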

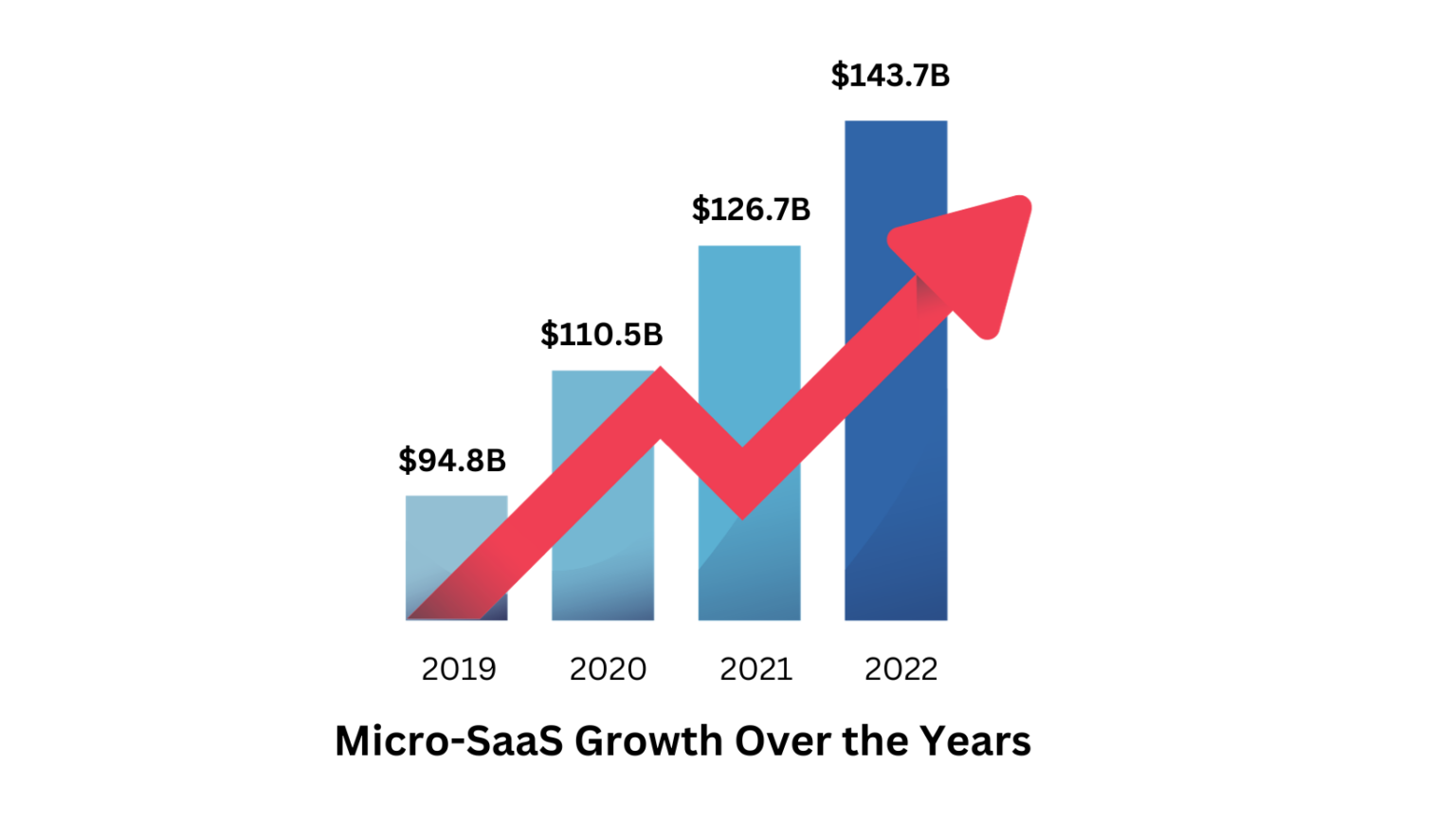

Self-Consistency does not eliminate hallucinations, but an error has to recur across most of the sampled chains to win the vote, which makes the final answer far more robust. When your AI content factory or business agent uses Self-Consistency, you are operating a high-precision intelligence engine. If you want to build a business with this logic, check out my guide on [AI-Powered Micro-SaaS].

Conclusion: Architecting the Future of Logic

The quality of your AI’s output is a direct reflection of your prompt’s logic. Chain-of-Thought Prompting is the ultimate tool for those who refuse to settle for the “average” response. Stop being a passive consumer and start becoming an AI Architect. Your journey to mastering this logic and building AI-driven wealth starts today.

The Psychology of AI Reasoning: Why It Works

Why does Chain-of-Thought Prompting work so effectively? It is often compared to human “System 2” thinking. While standard prompting relies on quick, intuitive responses (System 1), CoT triggers a more deliberate, analytical process. Because the model writes out intermediate steps before the answer, it spends more computation on the problem and can condition each step on the ones before it, which tends to reduce reasoning errors and “hallucinations,” the tendency for AI to invent facts.

Common Mistakes to Avoid in CoT

Even with Chain-of-Thought Prompting, you must be careful. One common mistake is providing a faulty example in your “few-shot” prompts. If your logic in the example is flawed, the AI will mirror that flawed logic in its final answer. Always ensure that your initial reasoning steps are mathematically and logically sound. In 2026, the best AI Architects spend more time auditing their logic chains than they do writing the final prompts.
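One way to audit a few-shot example before using it is to re-check its arithmetic automatically. The sketch below is a deliberately narrow illustration: the helper `find_arithmetic_errors` is hypothetical, it only recognizes simple `a op b = c` statements with plain decimal numbers (no thousands separators, units, or percentages), and the two sample exemplars are invented.

```python
import operator
import re
from typing import List

OPS = {"+": operator.add, "-": operator.sub, "*": operator.mul, "/": operator.truediv}
NUMBER = r"(-?\d+(?:\.\d+)?)"
STEP_PATTERN = re.compile(NUMBER + r"\s*([+\-*/])\s*" + NUMBER + r"\s*=\s*" + NUMBER)


def find_arithmetic_errors(exemplar: str, tol: float = 1e-9) -> List[str]:
    """Return a description of every 'a op b = c' statement that does not hold."""
    errors = []
    for left, op, right, claimed in STEP_PATTERN.findall(exemplar):
        if op == "/" and float(right) == 0:
            errors.append(f"{left} / {right} = {claimed} (division by zero)")
            continue
        actual = OPS[op](float(left), float(right))
        if abs(actual - float(claimed)) > tol:
            errors.append(f"{left} {op} {right} = {claimed} (expected {actual:g})")
    return errors


good = "Profit: 1200 * 0.15 = 180. After fees: 180 - 30 = 150."
bad = "Profit: 1200 * 0.15 = 160. After fees: 160 - 30 = 130."

print(find_arithmetic_errors(good))
print(find_arithmetic_errors(bad))
```

Note that each statement is checked on its own, so in the flawed exemplar only the first line is flagged even though the mistake carries into the second. A check like this catches arithmetic slips; whether the reasoning itself is sound still needs a human read.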